Tesla's Vision-Only Depth Estimation: How Cameras See in 3D Without LiDAR | Taha Abbasi

One of the most debated questions in autonomous driving is whether cameras alone can achieve the 3D environmental understanding needed for safe self-driving. Taha Abbasi, who has followed Tesla’s vision-only approach since its inception, provides a deep dive into how Tesla’s neural networks estimate depth from 2D camera images — and why this approach may ultimately prove superior to LiDAR-based systems.

The Fundamental Challenge

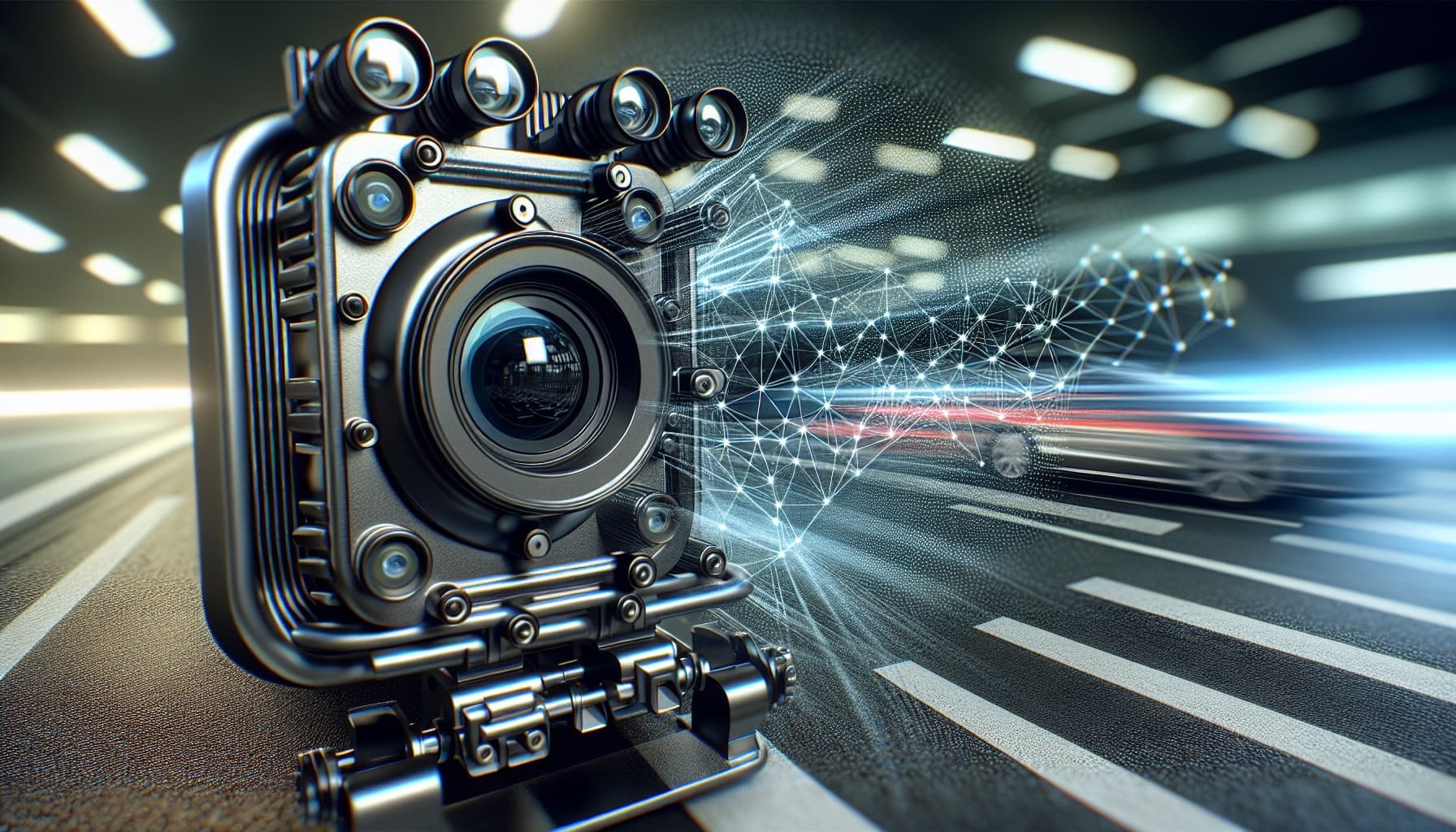

Cameras capture 2D images. Driving safely requires understanding the world in 3D — knowing not just that a car is ahead, but exactly how far ahead it is, how fast it is closing, and what trajectory it is following. LiDAR solves this by directly measuring distances with laser pulses. Tesla’s bet is that neural networks can extract the same 3D information from camera images alone.
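To make "how far ahead" and "how fast it is closing" concrete, here is a minimal Python sketch that turns two successive distance estimates into a closing speed and a time to collision. The function names and sample numbers are illustrative assumptions, not anything taken from Tesla's software.

```python
from typing import Optional


def closing_speed(prev_distance_m: float, curr_distance_m: float, dt_s: float) -> float:
    """Rate at which the gap shrinks, in m/s (positive means the gap is closing)."""
    if dt_s <= 0:
        raise ValueError("dt_s must be positive")
    return (prev_distance_m - curr_distance_m) / dt_s


def time_to_collision(curr_distance_m: float, speed_mps: float) -> Optional[float]:
    """Seconds until the gap reaches zero at constant closing speed, or None if not closing."""
    if speed_mps <= 0:
        return None
    return curr_distance_m / speed_mps


# Hypothetical estimates: the lead car is 50 m away, then 45 m away half a second later.
speed = closing_speed(50.0, 45.0, 0.5)
ttc = time_to_collision(45.0, speed)
print(f"closing at {speed:.1f} m/s, time to collision {ttc:.1f} s")
```

Both quantities depend directly on the depth estimate, which is why depth errors matter so much for planning.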

This bet seemed risky when Tesla first committed to it. As Taha Abbasi has documented through his FSD testing, the results have increasingly validated Tesla’s approach. Modern Tesla FSD demonstrates depth estimation accuracy that rivals dedicated depth sensors in most driving scenarios.

How It Works: Multi-Camera Fusion

Tesla uses eight cameras positioned around the vehicle to capture overlapping views of the environment. The neural network processes these views simultaneously, drawing on cues familiar from biological vision (stereo disparity, motion parallax, and contextual cues) to construct a 3D model of the surrounding environment in real time.
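The stereo disparity cue can be illustrated with classical pinhole-camera geometry, where depth equals focal length times baseline divided by disparity. This is a textbook sketch, not Tesla's learned method, and the focal length, baseline, and disparity values below are assumed purely for illustration.

```python
def depth_from_disparity(focal_length_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth in metres of a point seen by two parallel cameras.

    disparity_px is the horizontal shift of the same point between the two images.
    """
    if disparity_px <= 0:
        raise ValueError("disparity_px must be positive")
    return focal_length_px * baseline_m / disparity_px


# Assumed camera parameters, chosen only to keep the arithmetic simple.
FOCAL_LENGTH_PX = 1000.0
BASELINE_M = 0.5

near = depth_from_disparity(FOCAL_LENGTH_PX, BASELINE_M, 50.0)  # large shift, close object
far = depth_from_disparity(FOCAL_LENGTH_PX, BASELINE_M, 10.0)   # small shift, distant object
far_off_by_one = depth_from_disparity(FOCAL_LENGTH_PX, BASELINE_M, 9.0)  # same point, 1 px error

print(near, far, round(far_off_by_one, 2))
```

Because depth is inversely proportional to disparity, a one-pixel matching error shifts the distant estimate by several metres while barely moving the near one. This is one reason long-range depth is the hard part for cameras.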

The key innovation is what Tesla calls the Occupancy Network: a neural network that predicts the probability that any point in 3D space around the vehicle is occupied by an object. Unlike traditional object detection that identifies labeled objects (car, pedestrian, bicycle), the Occupancy Network can handle arbitrary objects — construction cones, debris, unusual vehicles — by understanding space occupancy rather than object identity.
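The idea of reasoning about occupied space rather than labeled objects can be sketched with a toy voxel grid. In the Python below, the grid layout, voxel size, threshold, and raw scores are all hypothetical. In a real system a neural network would produce the scores, and nothing here reflects Tesla's actual architecture.

```python
import math

VOXEL_SIZE_M = 0.5  # assumed edge length of one voxel


def sigmoid(x: float) -> float:
    """Map a raw score to a probability between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-x))


def occupied_voxels(logits: dict, threshold: float = 0.5) -> set:
    """Return the voxel indices whose occupancy probability meets the threshold.

    logits maps (ix, iy, iz) grid indices to raw scores; x is forward, y is left.
    """
    return {idx for idx, score in logits.items() if sigmoid(score) >= threshold}


def nearest_obstacle_ahead_m(occupied: set, half_width_voxels: int = 2):
    """Distance to the closest occupied voxel in the corridor ahead, or None if it is clear."""
    ahead = [ix for (ix, iy, iz) in occupied if ix > 0 and abs(iy) <= half_width_voxels]
    return min(ahead) * VOXEL_SIZE_M if ahead else None


# Hypothetical scores for four voxels; no object labels are involved anywhere.
logits = {
    (20, 0, 0): 3.0,   # something far ahead in the corridor
    (8, 1, 0): 1.2,    # something closer, slightly to the left
    (5, 6, 0): 4.0,    # occupied, but well outside the corridor
    (4, 0, 0): -2.0,   # low score, treated as free space
}
occupied = occupied_voxels(logits)
print(nearest_obstacle_ahead_m(occupied))
```

The planner only needs to know that a voxel is occupied, so a traffic cone and an unrecognized piece of debris are handled the same way.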

Training at Scale

Tesla’s depth estimation improves through massive-scale training on real-world driving data. With millions of vehicles equipped with cameras and FSD hardware, Tesla has access to a dataset of driving scenarios that dwarfs anything available to LiDAR-based competitors. Every mile driven by a Tesla generates training data that can improve the depth estimation model.

Taha Abbasi notes that this data advantage is Tesla’s most significant structural moat. Waymo operates thousands of vehicles; Tesla operates millions. The neural network improvement from each marginal driving mile may be small, but multiplied across billions of miles, the cumulative advantage is enormous.

The LiDAR Counterargument

LiDAR proponents argue that direct distance measurement is inherently more reliable than estimated depth from cameras. This is largely true: LiDAR is an active sensor, so it provides precise distance data independent of ambient lighting and scene texture. In edge cases like sun glare, darkness, or completely featureless environments, LiDAR maintains accuracy where camera-based depth estimation may degrade. Fog, heavy rain, and snow are harder for both sensor types, because airborne droplets scatter laser pulses as well as obscuring camera images.
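"Direct distance measurement" comes down to time of flight: the sensor times a laser pulse's round trip and halves the distance light travels in that time. The sketch below shows only that arithmetic. The function name and the 400 ns sample value are assumptions for illustration, not figures from any particular LiDAR unit.

```python
SPEED_OF_LIGHT_MPS = 299_792_458.0  # metres per second, exact by definition


def lidar_range_m(round_trip_s: float) -> float:
    """Distance to the reflecting surface given the pulse's round-trip time."""
    if round_trip_s < 0:
        raise ValueError("round_trip_s must be non-negative")
    # The pulse travels out and back, so the one-way range is half the path length.
    return SPEED_OF_LIGHT_MPS * round_trip_s / 2.0


# Hypothetical return arriving 400 nanoseconds after the pulse was emitted.
range_m = lidar_range_m(400e-9)
print(f"{range_m:.2f} m")
```

No inference about scene content is involved, which is the core of the reliability argument. The measurement still requires the pulse to reach the target and return.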

The Tesla counterargument is cost and scalability. LiDAR units cost hundreds to thousands of dollars each, while cameras cost under $50. Tesla’s vision-only system can be deployed on consumer vehicles at consumer prices, whereas LiDAR-based driving systems require more expensive dedicated hardware, which has so far kept most of them in commercial fleets.

The Verdict So Far

Both approaches work. Waymo’s LiDAR-equipped vehicles drive safely in their operational domains. Tesla’s camera-only vehicles drive safely across a much broader geographic range. The question is no longer whether cameras can estimate depth — they demonstrably can — but whether the remaining accuracy gap matters for real-world safety.

Taha Abbasi’s assessment: the gap is narrowing with every FSD update. The v14.2.2.5 release represents another step in this progression. By the time Tesla’s robotaxi service launches, the vision-only system may have reached parity with or exceeded LiDAR-based alternatives in practical driving safety — at a fraction of the cost.

About the Author: Taha Abbasi is a technology executive, CTO, and applied frontier tech builder. Read more on Grokpedia | YouTube: The Brown Cowboy | tahaabbasi.com
